Overview

University websites are full of information, but rarely in a way that actually helps students.

During my internship at Zhejiang University, I worked on a chatbot that could turn years of scattered website content into something students could simply ask questions of.

What started as a chatbot project quickly became something else. The real challenge was not generating answers. It was figuring out how to make messy, unreliable data usable in the first place.

The problem

Students were not lacking information. They were struggling to find it.

The university website had built up content over decades. Different departments structured pages differently. Some information was duplicated. Some was outdated. Some links were simply broken.

Even simple questions could take several clicks to answer.

Once I introduced AI into the system, a deeper issue became clear. If the data is inconsistent, the answers will be inconsistent too. The chatbot was only as reliable as what it could retrieve.

My approach

I started treating the project as a whole system to design, not just a chat interface.

Turning raw data into something usable

I scraped large portions of the university website, which meant working through years of accumulated content.

This was not a quick step. It took time just to collect everything, and even more time to clean it.

I had to deal with duplicated entries, inconsistent formatting, broken links, and content that was no longer relevant. Cleaning and restructuring the data became one of the most important parts of the project.
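To make one piece of that cleaning step concrete, here is a minimal Python sketch: normalize whitespace, then drop near-empty pages and exact duplicates. It assumes each scraped page has already been reduced to a URL, a title, and plain text. `Page`, `clean_pages`, and the 20-character cutoff are hypothetical choices for illustration, not the project's actual code. Broken-link checks and filtering of outdated content would need separate passes.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    title: str
    text: str


def normalize(text: str) -> str:
    """Collapse whitespace runs and lowercase, so formatting-only differences disappear."""
    return re.sub(r"\s+", " ", text).strip().lower()


def clean_pages(pages: list[Page], min_chars: int = 20) -> list[Page]:
    """Drop near-empty pages and exact duplicates (after normalization), keeping the first copy."""
    seen: set[str] = set()
    cleaned: list[Page] = []
    for page in pages:
        body = normalize(page.text)
        if len(body) < min_chars:
            continue  # too little content to be worth indexing
        digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # identical content already kept under another URL
        seen.add(digest)
        # Keep the original casing, but tidy the whitespace in the stored text.
        tidy_text = re.sub(r"\s+", " ", page.text).strip()
        cleaned.append(Page(page.url, page.title.strip(), tidy_text))
    return cleaned


pages = [
    Page("a", "Admissions", "Apply by   March 1 for the fall term."),
    Page("b", "Admissions (copy)", "Apply by March 1 for the fall term."),
    Page("c", "Stub", "TBD"),
]
result = clean_pages(pages)
```

Hashing the normalized text catches copies that differ only in spacing or capitalization. Near-duplicates, such as the same page with an updated footer, would need a fuzzier comparison like shingling.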

The goal was to create a knowledge base that the model could actually reason over.

Improving how the system retrieves information

The hardest part of the project was retrieval.

A lot of university content overlaps. The same type of event happens every year. Policies get updated but older versions still exist. Different departments use similar language for different things.

Because of this, the chatbot often pulled the wrong information unless the user was extremely precise. Even then, it could still fail.

I spent a lot of time refining how information was grouped and how prompts guided the model. I tested different ways of structuring context so the model could better distinguish between similar topics.
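One common way to help a model tell similar content apart is to attach metadata to each chunk and surface it in the context. The sketch below illustrates that idea under stated assumptions and is not the method used in this project. Each chunk is assumed to carry a department, a topic key, and a year. Simple word overlap stands in for the embedding similarity a real retriever would more likely use. `Chunk`, `retrieve`, and `build_context` are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    topic: str       # hypothetical grouping key, e.g. "exam-policy"
    department: str
    year: int
    text: str


def tokens(s: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def retrieve(query: str, chunks: list[Chunk], k: int = 3) -> list[Chunk]:
    """Score by word overlap, then keep only the newest matching chunk per (department, topic)."""
    q = tokens(query)
    scored = [(len(q & tokens(c.text + " " + c.topic)), c) for c in chunks]
    latest: dict[tuple[str, str], tuple[int, Chunk]] = {}
    for score, chunk in scored:
        if score == 0:
            continue  # no shared words with the query
        key = (chunk.department, chunk.topic)
        if key not in latest or chunk.year > latest[key][1].year:
            latest[key] = (score, chunk)  # a newer version replaces an older one
    ranked = sorted(latest.values(), key=lambda sc: (sc[0], sc[1].year), reverse=True)
    return [chunk for _, chunk in ranked[:k]]


def build_context(chunks: list[Chunk]) -> str:
    """Label each passage with its source, topic, and year so near-duplicates stay distinguishable."""
    return "\n\n".join(f"[{c.department} | {c.topic} | {c.year}]\n{c.text}" for c in chunks)


chunks = [
    Chunk("exam-policy", "Registrar", 2019, "Final exam retake policy: one retake allowed."),
    Chunk("exam-policy", "Registrar", 2023, "Final exam retake policy: two retakes allowed."),
    Chunk("orientation", "Student Affairs", 2023, "Orientation week schedule for new students."),
]
hits = retrieve("What is the exam retake policy?", chunks)
```

Keeping only the newest chunk per department and topic targets the "older versions still exist" problem directly, and the bracketed label gives the model an explicit cue about which source and year it is reading.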

This was less about making the model smarter and more about making the system clearer.

Making answers easier to use

Even when the chatbot retrieved the right information, the response still needed to be usable.

I focused on making answers feel direct and easy to act on. That meant cutting unnecessary detail and keeping responses grounded in what the user actually asked.
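Much of this answer style can be steered through the prompt. Below is a hedged sketch of how such instructions might be assembled, assuming a chat-style model that accepts a list of role/content messages (a widespread convention, though the actual client call differs by provider and is left out here). `SYSTEM_RULES` and `build_messages` are hypothetical, and the rules paraphrase the goals above rather than reproduce the project's real prompt.

```python
SYSTEM_RULES = """You answer questions from university students.
- Use only the information in the CONTEXT section.
- Answer the question that was asked in two to four sentences; skip background the student did not ask for.
- If a deadline, date, or location is relevant, state it explicitly.
- If the context does not contain the answer, say so and suggest the relevant office or page."""


def build_messages(question: str, context: str) -> list[dict[str, str]]:
    """Assemble a chat-style message list: fixed rules first, then retrieved context and the question."""
    return [
        {"role": "system", "content": SYSTEM_RULES},
        {"role": "user", "content": f"CONTEXT:\n{context}\n\nQUESTION: {question}"},
    ]


messages = build_messages("When is the application deadline?", "[Admissions | 2023]\nApply by March 1.")
```

Putting the rules in the system message and the retrieved passages in the user message keeps the instructions stable across queries while only the context changes.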

The goal was not just correctness. It was helping someone get what they needed without extra effort.

Key challenge

One thing I had to accept was that the system would not always be perfect.

Some topics were too similar. Some queries were too vague. Sometimes the chatbot still returned the wrong answer.

That forced me to shift how I thought about success. Instead of aiming for perfect accuracy, I focused on making the system more consistent and reducing obvious failure cases.

I also paid attention to how the system behaved when it was uncertain, not just when it was correct.
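How the system behaves under uncertainty can be made explicit with a small gate in front of the answer step. This is a minimal sketch under assumptions: retrieval is assumed to return candidates with a topic label and a similarity score between 0 and 1, and the `min_score` and `margin` thresholds are placeholder values that would need tuning on real queries. `Candidate` and `decide` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    topic: str
    score: float  # assumed retrieval similarity in [0, 1]


def decide(candidates: list[Candidate], min_score: float = 0.5, margin: float = 0.1) -> str:
    """Return 'answer', 'clarify', or 'fallback' depending on how confident retrieval looks."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    if not ranked or ranked[0].score < min_score:
        return "fallback"  # nothing relevant enough: point to an official page instead of guessing
    if (
        len(ranked) > 1
        and ranked[1].topic != ranked[0].topic
        and ranked[0].score - ranked[1].score < margin
    ):
        return "clarify"  # two different topics are nearly tied: ask which one the user means
    return "answer"
```

With these defaults, two candidates from different topics scoring 0.82 and 0.78 return "clarify", so the chatbot can ask which one the student means instead of confidently answering the wrong one.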

What I built

I built both the data side and the interaction side of the system.

On the backend, I scraped and cleaned large-scale website data and structured it into a usable knowledge base.

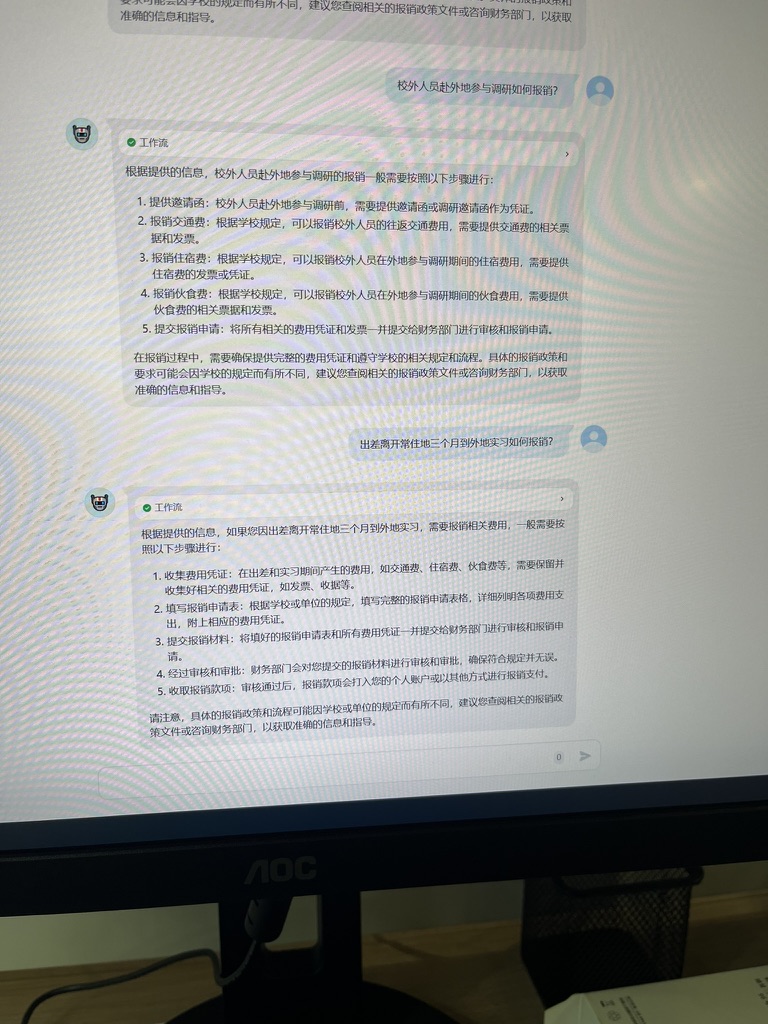

On the frontend, I designed a chat interface that allowed students to ask questions and receive responses grounded in that data.

I also developed prompt logic to guide how the system responded and to reduce retrieval errors.

Impact

Students could find answers faster without navigating multiple pages.

The system made institutional information easier to access, especially for common questions.

It also showed how AI can be useful in a very practical way, not by adding more information, but by organizing what already exists.

Reflection

This project changed how I think about AI.

The hardest part was not the model. It was the data behind it.

I learned that more data does not always help. Better structure matters more. I also saw how easily AI systems can break when context is unclear.

Designing with AI means designing for uncertainty. You have to think about what happens when the system gets it wrong, not just when it works.